New publication at ESWC 2026: Fully geometric multi-hop reasoning on knowledge graphs with transitive relations

A new paper by Fernando Zhapa-Camacho and Robert Hoehndorf, presented at ESWC 2026, introduces GeometrE, a fully geometric box-embedding method for multi-hop reasoning on knowledge graphs. It needs no neural components for logical operations and preserves transitive relations through an idempotency-enforcing loss.

About

The Bio-Ontology Research Group presented "Fully Geometric Multi-Hop Reasoning on Knowledge Graphs with Transitive Relations" at the 23rd Extended Semantic Web Conference (ESWC 2026). The paper, by Fernando Zhapa-Camacho and Robert Hoehndorf, introduces GeometrE, a geometric embedding method for multi-hop query answering on knowledge graphs that, unlike previous geometric methods, does not require any neural components to learn the logical operations.

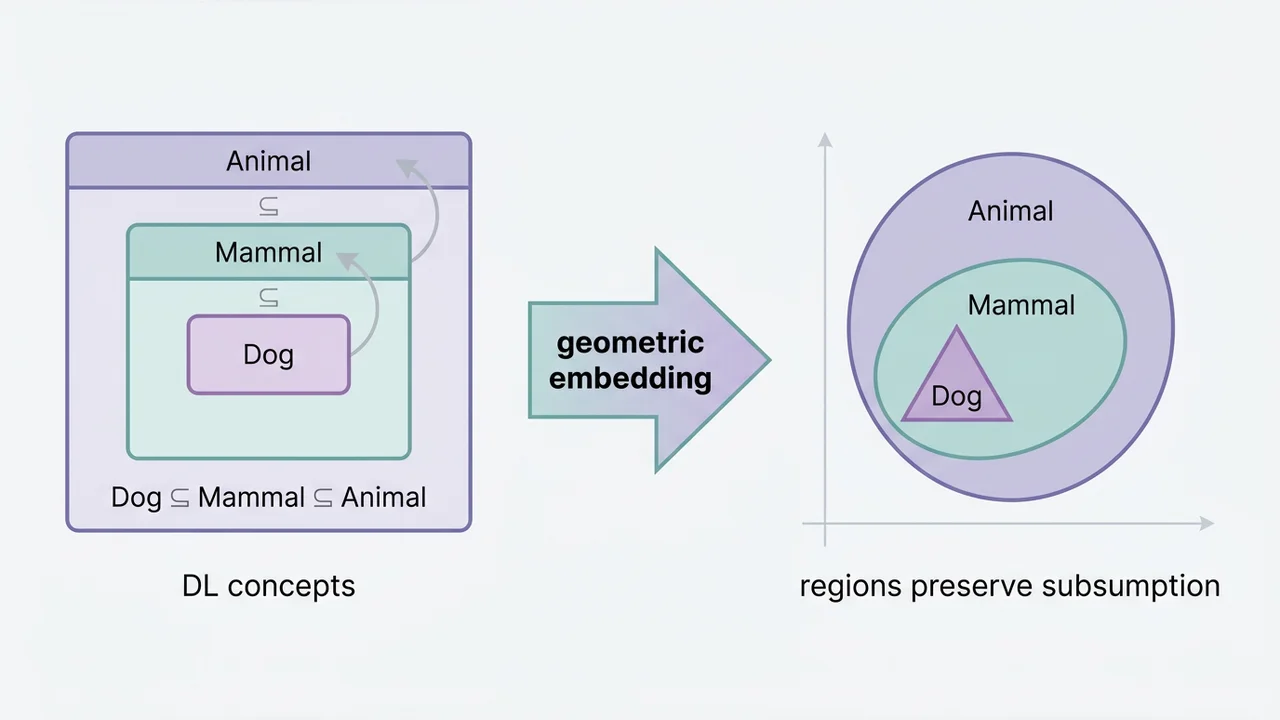

Existing geometric query-answering methods such as Query2Box represent queries as geometric objects (boxes or cones) or as probability distributions, and rely on neural networks to learn operations such as intersection and relation projection. This makes the embeddings only partially interpretable, since the logical operators themselves are hidden inside a learned model. GeometrE removes this dependency: every logical operation (intersection, negation and relation projection) is mapped to a pure geometric transformation that can be inspected directly. Intersection is the coordinate-wise maximum of the lower corners and minimum of the upper corners; relation projection is a four-parameter transformation on box centres and offsets; negation is approximated by exploiting the fact that the probability of two boxes overlapping decreases exponentially with dimensionality.
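The intersection rule described above is simple enough to illustrate in a few lines. The following is a minimal sketch in plain Python, not the authors' implementation: the `Box` type, the `intersect` function name, and the choice to return `None` for an empty intersection are all assumptions made for illustration.

```python
from typing import List, Optional, Tuple

# Hypothetical representation: an axis-aligned box as (lower corner, upper corner).
Box = Tuple[List[float], List[float]]


def intersect(boxes: List[Box]) -> Optional[Box]:
    """Intersect axis-aligned boxes coordinate by coordinate.

    The lower corner of the result is the max of all lower corners,
    the upper corner is the min of all upper corners.
    """
    lowers = [lo for lo, _ in boxes]
    uppers = [hi for _, hi in boxes]
    lower = [max(vals) for vals in zip(*lowers)]
    upper = [min(vals) for vals in zip(*uppers)]
    # Assumption of this sketch: an empty intersection (lower > upper in
    # some dimension) is reported as None.
    if any(l > u for l, u in zip(lower, upper)):
        return None
    return lower, upper


a = ([0.0, 0.0], [2.0, 2.0])
b = ([1.0, -1.0], [3.0, 1.0])
c = ([5.0, 5.0], [6.0, 6.0])

print(intersect([a, b]))  # ([1.0, 0.0], [2.0, 1.0])
print(intersect([a, c]))  # None
```

Because the result is computed directly from the corner coordinates, it can be read off and checked by hand, which is the kind of interpretability the paragraph above refers to.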

To handle transitive relations such as part_of or hierarchical predicates, where r(a,b) ∧ r(b,c) → r(a,c) should hold, the paper makes two contributions. First, a transitive loss function regularises the relation embedding so that the relation projection becomes idempotent (Tr(Tr(B)) = Tr(B)). Second, an ordering-preserving distance constraint along a chosen "transitive dimension" enforces a strict ordering of entities along that axis, which the paper proves is sufficient to guarantee that the inferred triple r(a,c) is recovered by the embedding even when it is not explicitly present in the training set.
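To make the idempotency condition Tr(Tr(B)) = Tr(B) concrete, here is a hedged sketch of one way such a penalty could be written. It is not the loss from the paper: the per-dimension affine form of the projection (scale and shift on centres, scale and shift on offsets), the names `project` and `idempotency_penalty`, and the squared-error form of the penalty are all assumptions chosen to match the "four-parameter transformation on box centres and offsets" described earlier.

```python
from typing import List, Sequence, Tuple

# Hypothetical representation: a box as (centre, offset), one value per dimension.
Box = Tuple[List[float], List[float]]
# Hypothetical relation parameters: (centre scale, centre shift,
# offset scale, offset shift), each a per-dimension vector.
Params = Tuple[List[float], List[float], List[float], List[float]]


def project(box: Box, params: Params) -> Box:
    """Apply an assumed affine relation projection to a box."""
    centre, offset = box
    scale_c, shift_c, scale_o, shift_o = params
    new_centre = [a * c + b for a, b, c in zip(scale_c, shift_c, centre)]
    # This sketch does not enforce non-negative offsets.
    new_offset = [s * o + d for s, d, o in zip(scale_o, shift_o, offset)]
    return new_centre, new_offset


def idempotency_penalty(boxes: Sequence[Box], params: Params) -> float:
    """Mean squared difference between T(T(B)) and T(B) over sample boxes.

    The penalty is zero exactly when applying the projection twice gives
    the same box as applying it once, for every sampled box.
    """
    total = 0.0
    for box in boxes:
        once = project(box, params)
        twice = project(once, params)
        total += sum((x - y) ** 2 for x, y in zip(twice[0], once[0]))
        total += sum((x - y) ** 2 for x, y in zip(twice[1], once[1]))
    return total / len(boxes)


samples = [([1.0, 2.0], [0.5, 0.5]), ([3.0, -1.0], [1.0, 2.0])]
identity = ([1.0, 1.0], [0.0, 0.0], [1.0, 1.0], [0.0, 0.0])
constant = ([0.0, 0.0], [4.0, 4.0], [0.0, 0.0], [1.0, 1.0])
shifting = ([1.0, 1.0], [0.5, 0.5], [1.0, 1.0], [0.0, 0.0])

print(idempotency_penalty(samples, identity))  # 0.0
print(idempotency_penalty(samples, constant))  # 0.0
print(idempotency_penalty(samples, shifting))  # 0.5
```

Under this assumed parametrisation, the identity map and a map that sends every box to one fixed box are both idempotent, while a pure translation is not: each extra application moves the box further, so the penalty is positive and a regulariser of this kind would push the parameters away from it.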

The method is evaluated on standard multi-hop reasoning benchmarks (WN18RR-QA, NELL-QA, FB15k-237) across nine query types including conjunctions, disjunctions and negations, and shows consistent improvements over Query2Box, ConE and related baselines, with particularly large gains on queries involving transitive relations.

The paper is published in the ESWC 2026 proceedings (Springer LNCS); DOI: 10.1007/978-3-032-25156-5_14.